In Jess Row’s short story “The Secrets of Bats,” a high school teacher living in Hong Kong recalls one student’s experiment in the dark hallways after school hours. Her goal is to see the way bats see, that is, without actually seeing. She records her progress in a notebook as she walks through the vast acoustic chambers of the school’s basement, finding doors with her eyes shut.

It’s hardly a secret shared by bats alone. While bats use the technique, known as echolocation, to navigate the night skies, some blind people have mastered it as well. Using simple tongue clicks, or by tapping the ground or walls with a cane, they can safely make their way through enclosed spaces, following the echoes to determine the path ahead.

Vision is not the only sense people need to navigate successfully, or even to gauge the concept of space. Nor is echolocation an ability exclusive to those with impaired vision. A study conducted by researchers from the Ludwig Maximilian University of Munich, Germany, explored in depth the mental processes behind echolocation, an ability that anyone can train themselves to use. Learning it requires careful coordination between the sensory and motor cortices of the brain, which are associated with our sense of touch (processing tactile information) and with movement, respectively.

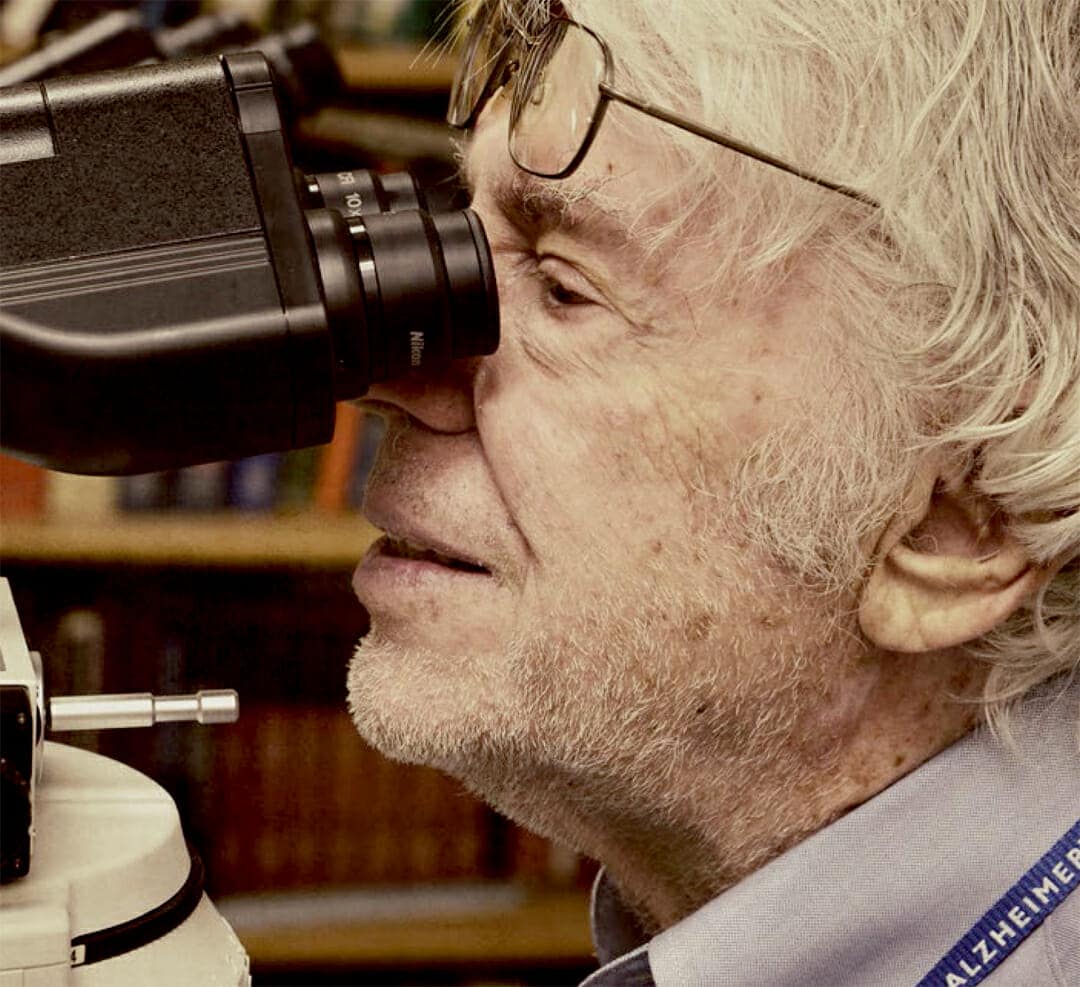

The research team, led by Dr. Lutz Wiegrebe, a professor in the biology department at LMU, and Dr. Virginia Flanagin, from the German Center for Vertigo and Balance Disorders, found that sighted people can learn to accurately estimate the size of a room by echolocation, using a series of self-generated clicks. The researchers used functional MRI to monitor activity in various brain regions of 11 sighted test subjects and one blind individual while they performed their assigned task. The results were published in the Journal of Neuroscience.

Wiegrebe and his colleagues found a small chapel with numerous reflective surfaces, chosen for its long reverberation time, and reproduced it as a computer model for the test subjects to explore. To do so, they first studied the building’s acoustics.

“In effect, we took an acoustic photograph of the chapel, and we were then able to computationally alter the scale of this sound image, which allowed us to compress it or expand the size of the virtual space at will,” Wiegrebe explains.
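To get a feel for what shrinking or stretching a space does to its echoes, consider a rough sketch. It is not the researchers' method: it assumes a single flat wall straight ahead and a speed of sound of about 343 meters per second, and the function names are made up for illustration. The point is simply that the delay of an echo grows in step with the distance to the surface that reflects it.

```python
# Illustrative sketch only; names and values are assumptions, not from the study.
SPEED_OF_SOUND_M_PER_S = 343.0  # approximate speed of sound in air at about 20 °C


def echo_delay_ms(distance_m: float) -> float:
    """Round-trip time, in milliseconds, for a click to reach a wall and return."""
    return 2.0 * distance_m / SPEED_OF_SOUND_M_PER_S * 1000.0


def scaled_echo_delays_ms(distances_m, scale):
    """Echo delays after every wall distance is multiplied by the same scale factor."""
    return [echo_delay_ms(d * scale) for d in distances_m]


if __name__ == "__main__":
    walls_m = [2.0, 5.0, 10.0]  # hypothetical distances to three surfaces
    for scale in (0.5, 1.0, 2.0):
        delays = scaled_echo_delays_ms(walls_m, scale)
        print(scale, [round(d, 1) for d in delays])
```

Doubling the scale doubles every delay, which is one reason a larger room sounds different from a smaller one even when the click itself is identical.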

The test subjects were each fitted with a small headset, consisting of a pair of headphones and a microphone, and then placed into the MRI scanner. Signals sent to the headphones were used to coordinate their positions within the virtual chapel. They were instructed to produce simple tongue clicks, and the echoes these clicks produced were then played back to them through the headphones. The pitch of each echo corresponded to different room sizes, reflecting their positions in the building. These sounds are ideal because they’re low-pitched and don’t risk being drowned out by ambient noise.

The participants, unable to actually see the virtual space they occupied, found the results quite surprising and quickly adjusted to their newfound ability.

“All participants learned to perceive even small differences in the size of the space,” said Wiegrebe. The participants could determine the size of the virtual room more accurately when they actively produced the tongue clicks than when they simply listened to clicks played back to them. Despite never having visited the chapel, one of the participants was able to estimate the size of the room with just a 4 percent margin of error.

While echolocation research has been done before, the LMU experiment stands out for one important reason: The basic setup for the project allowed researchers to witness the neuronal mechanisms used by the brain for echolocation — to see what happens when we stumble around in the dark. “Echolocation requires a high degree of coupling between the sensory and the motor cortex,” said Flanagin.

Tongue clicks produce sound waves that resonate in the surroundings. The returning echoes are picked up by both ears and trigger the auditory portion of the sensory cortex, where we process what we have just heard. In the sighted subjects, this stimulus is followed shortly by activation of the motor cortex, which drives the tongue and vocal cords to produce new clicking sounds.

The tests carried out with the congenitally blind participant, however, revealed that when he heard the reflected sounds, his visual cortex was activated. “That the primary visual cortex can execute auditory tasks is a remarkable testimony to the plasticity of the human brain,” Wiegrebe remarked. In the sighted subjects, signals in the visual cortex were significantly weaker throughout the echolocation task.

The next step for the researchers is to develop a dedicated training program that helps blind people fully hone their echolocation skills, using simple tongue and palate clicks and learning what distinguishes each echo.

According to Dr. Peter Scheifele of the University of Cincinnati, “Acoustically, according to laws of physics, it’s certainly possible to make a pulse that will tell you something about objects in front of you, such as fences, garbage cans, or basketballs.” Think of it as a lifelong skill that gets better with practice. The more clicks and the greater the frequency, the more objects you’ll be able to perceive.

This article is updated from its initial publication in Brain World Magazine’s print edition.
